The training time of a machine learning model depends on several factors, such as the complexity of the model, the size of the data, the hardware resources, and the hyperparameters. To minimize the training time without significantly compromising the accuracy of the model, one should optimize these factors as much as possible.

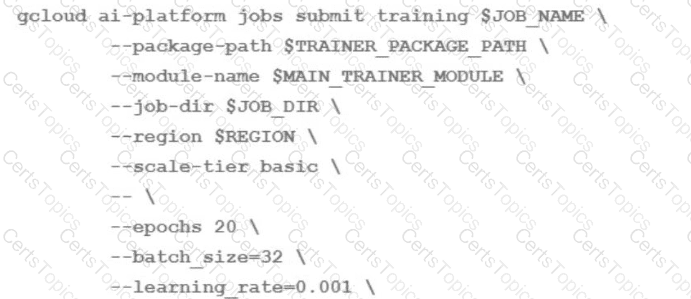

One of the factors that can have a significant impact on the training time is the scale-tier parameter, which specifies the type and number of machines to use for the training job on AI Platform. The scale-tier parameter can be one of the predefined values, such as BASIC, STANDARD_1, PREMIUM_1, or BASIC_GPU, or a custom value that allows you to configure the machine type, the number of workers, and the number of parameter servers [1].

To speed up the training of an LSTM-based model on AI Platform, one should modify the scale-tier parameter to use a higher tier or a custom configuration that provides more computational resources, such as more CPUs, GPUs, or TPUs. This can reduce the training time by increasing the parallelism and throughput of the training job. However, one should also consider the trade-off between training time and cost, as higher tiers or custom configurations may incur higher charges [2].
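As a rough illustration of what changing the scale tier means in practice, the sketch below builds the `trainingInput` portion of a training job request as a plain Python dictionary. The field names (`scaleTier`, `masterType`, `workerType`, `workerCount`, `parameterServerType`, `parameterServerCount`) follow the AI Platform Training job specification as best understood here; the helper `build_training_input` and the machine types and counts in the usage example are hypothetical choices for illustration, not recommended settings.

```python
from typing import Any, Dict, Optional

# Predefined tiers named in the text, plus BASIC_TPU (assumed to be available as well).
PREDEFINED_TIERS = {"BASIC", "STANDARD_1", "PREMIUM_1", "BASIC_GPU", "BASIC_TPU"}


def build_training_input(
    scale_tier: str,
    master_type: Optional[str] = None,
    worker_type: Optional[str] = None,
    worker_count: int = 0,
    parameter_server_type: Optional[str] = None,
    parameter_server_count: int = 0,
) -> Dict[str, Any]:
    """Hypothetical helper: return the scale-tier part of a trainingInput dict."""
    if scale_tier in PREDEFINED_TIERS:
        # Predefined tiers fix the cluster shape, so no machine fields are set.
        return {"scaleTier": scale_tier}
    if scale_tier != "CUSTOM":
        raise ValueError(f"unknown scale tier: {scale_tier}")
    if master_type is None:
        raise ValueError("a CUSTOM tier needs a master machine type")

    training_input: Dict[str, Any] = {
        "scaleTier": "CUSTOM",
        "masterType": master_type,
    }
    # Workers and parameter servers are optional; include them only if requested.
    if worker_count > 0:
        training_input["workerType"] = worker_type
        training_input["workerCount"] = worker_count
    if parameter_server_count > 0:
        training_input["parameterServerType"] = parameter_server_type
        training_input["parameterServerCount"] = parameter_server_count
    return training_input


# Moving from a single-machine tier to a single-GPU tier:
basic_gpu = build_training_input("BASIC_GPU")

# A custom cluster; the machine types and counts are illustrative assumptions.
custom = build_training_input(
    "CUSTOM",
    master_type="standard_gpu",
    worker_type="standard_gpu",
    worker_count=4,
    parameter_server_type="standard",
    parameter_server_count=2,
)
```

The same choice can usually be made from the command line with the `--scale-tier` flag of `gcloud ai-platform jobs submit training`, or through a YAML config file that carries the same fields. Note that adding workers shortens training only if the training code is written to distribute work across them.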

The other options are not as effective or may have adverse effects on the model accuracy. Reducing the epochs parameter, which specifies the number of times the model sees the entire dataset, may shorten the training time, but it can also stop training before the model has converged and so hurt its performance. Modifying the batch size parameter, which specifies the number of examples per batch, may affect the model’s stability and generalization ability, as well as the memory usage and the gradient update frequency. Modifying the learning rate parameter, which specifies the step size of the gradient descent optimization, may affect the model’s convergence and performance, and a poorly chosen value raises the risk of overshooting the minimum or getting stuck in local minima [3].
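To make the link between epochs, batch size, and gradient update frequency concrete, here is a minimal arithmetic sketch. It assumes one gradient update per batch and a final partial batch that is still processed; the dataset size, batch sizes, and epoch counts are made-up numbers, and `gradient_updates` is a hypothetical helper.

```python
import math


def gradient_updates(num_examples: int, batch_size: int, epochs: int) -> int:
    """Total gradient updates, assuming one update per batch (last partial batch included)."""
    steps_per_epoch = math.ceil(num_examples / batch_size)
    return steps_per_epoch * epochs


baseline = gradient_updates(100_000, batch_size=64, epochs=10)      # 15630
larger_batch = gradient_updates(100_000, batch_size=256, epochs=10)  # 3910
fewer_epochs = gradient_updates(100_000, batch_size=64, epochs=5)    # 7815
```

Both changes cut the number of updates, which is why they can shorten training, but the model then gets fewer opportunities to adjust its weights. That is the accuracy risk described above, and it is absent when only the hardware is scaled up.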

References: [1] Using predefined machine types; [2] Distributed training; [3] Hyperparameter tuning overview