Given the following PySpark code snippet in a Databricks notebook:

filtered_df = spark.read.format("delta").load("/mnt/data/large_table") \
    .filter("event_date > '2024-01-01'")

filtered_df.count()

The data engineer notices from the Query Profiler that the scan operator for filtered_df is reading almost all files, despite the filter being applied.

What is the probable reason for poor data skipping?

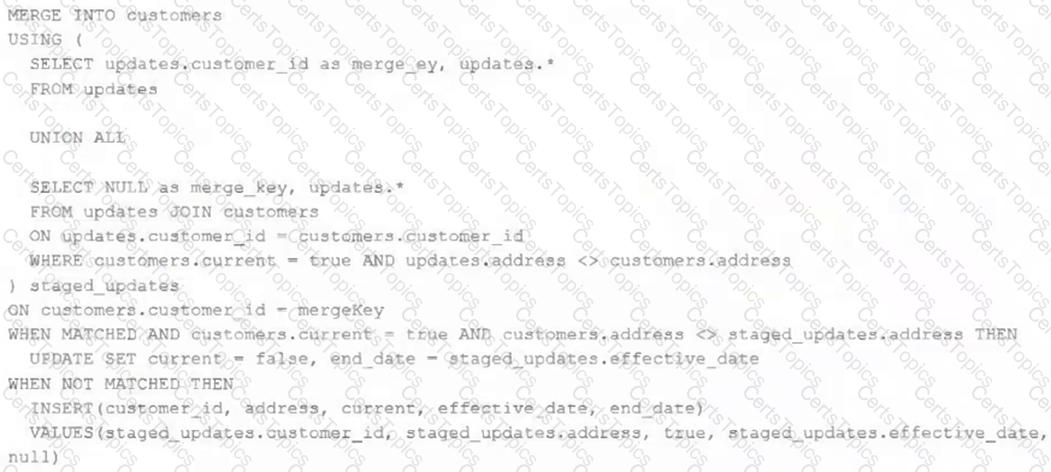

The view updates represents an incremental batch of all newly ingested data to be inserted or updated in the customers table.

The following logic is used to process these records.

Which statement describes this implementation?

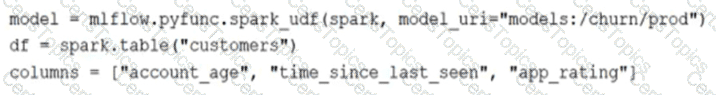

The data science team has created and logged a production model using MLflow. The model accepts a list of column names and returns a new column of type DOUBLE.

The following code correctly imports the production model, loads the customer table containing the customer_id key column into a DataFrame, and defines the feature columns needed for the model.

Which code block will output a DataFrame with the schema "customer_id LONG, predictions DOUBLE"?

A data architect is designing a Databricks solution to efficiently process data for different business requirements.

In which scenario should a data engineer use a materialized view compared to a streaming table?